Running AI Locally Changed How I Think About Software

What happens when you stop renting GPUs and start feeling constraints. A story about Colab bills, CUDA crashes, and why friction is the best teacher.

I kept recharging my Colab subscription until I realized I was renting a GPU I didn't even control.

The Cloud Trap

I started on Google Colab Pro with a simple goal: train a binary classifier, then move on to fine-tuning small LLMs with HuggingFace. Colab made it very easy to get started. A GPU in the browser, no setup, no hardware, only code.

But the sessions kept dying. I would be training a model, tweaking hyperparameters, or watching loss curves when the connection dropped and all the progress was gone. I had to re-upload and re-run everything. Then the compute units ran out, so I recharged and started again. I was paying for a GPU I didn't control, on an unpredictable timeline.

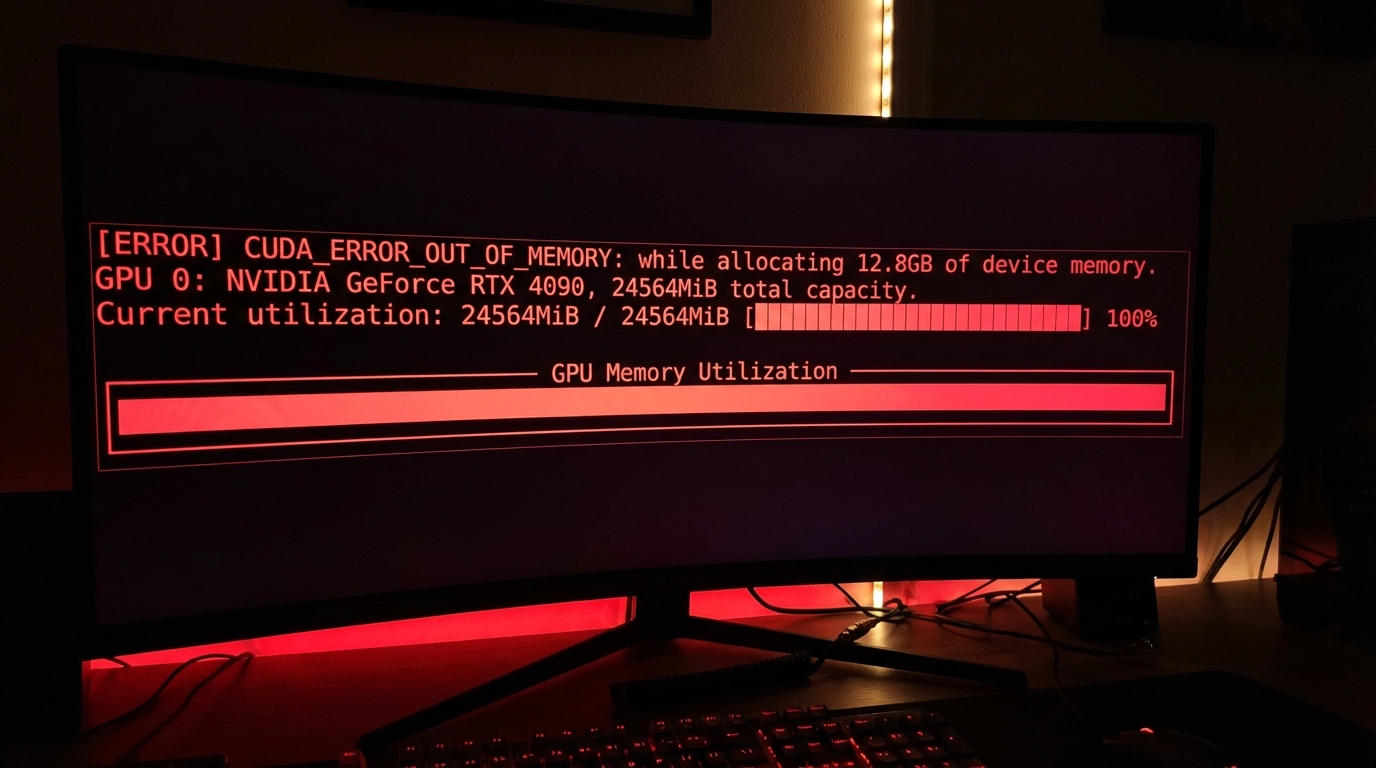

The Crash

I tried fine-tuning Mistral 7B with LoRA using the HuggingFace PEFT library.

The script started.

Tokenizer loaded.

Model loaded.

Then:

CUDA out of memory. Tried to allocate 2.14 GiB.

GPU 0 has a total capacity of 8.00 GiB...

I stared at the terminal.

Googled the error.

Every answer said the same thing:

*Reduce batch size. Use gradient checkpointing. Or get more VRAM.*

I tried the first two.

Still crashed.

That is when I understood something no tutorial had taught me:

**The model does not care about your ambition. It cares about memory.**
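A rough back-of-the-envelope sketch shows why shrinking the batch could not save that run. It assumes a round 7 billion parameters (not an exact count for any particular model) and counts only the weights, ignoring activations, optimizer state, LoRA adapters, and the CUDA context. The 8 GiB figure comes from the error message; everything else here is an illustrative assumption.

```python
# Weight-only memory estimate for a ~7B-parameter model.
# Assumption: 7e9 parameters is a round illustrative figure.
GIB = 2**30
N_PARAMS = 7_000_000_000
VRAM_GIB = 8.0  # total capacity reported in the error message

# Storage cost per parameter at common precisions.
BYTES_PER_PARAM = {"fp32": 4.0, "fp16": 2.0, "int8": 1.0, "4-bit": 0.5}


def weights_gib(n_params, bytes_per_param):
    """Memory needed just to hold the weights, in GiB."""
    return n_params * bytes_per_param / GIB


def fits(n_params, bytes_per_param, vram_gib):
    """True if the weights alone are smaller than the available VRAM."""
    return weights_gib(n_params, bytes_per_param) < vram_gib


for name, nbytes in BYTES_PER_PARAM.items():
    size = weights_gib(N_PARAMS, nbytes)
    verdict = "fits" if fits(N_PARAMS, nbytes, VRAM_GIB) else "does not fit"
    print(f"{name:>5}: {size:5.1f} GiB of weights -> {verdict} in {VRAM_GIB:.0f} GiB")
```

Under these assumptions the half-precision weights alone already exceed the card, before a single batch is loaded. "Fits" here only means the weights are smaller than the card; training still needs headroom on top.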

What the Crash Taught Me

Running locally stripped away every abstraction I had been hiding behind.

**VRAM matters more than compute.**

You can have a powerful GPU and still fail because:

- The model does not fit

- Memory fragments over time

- Multiple processes compete silently
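Fragmentation is the least intuitive of the three, so here is a toy model of it. This is not how a real CUDA allocator works (those are far more sophisticated); it is a minimal sketch, with hypothetical helper names, of how free memory can be plentiful in total and still useless for one large tensor.

```python
def largest_free_run(slots):
    """Length of the longest run of contiguous free (None) slots."""
    best = run = 0
    for slot in slots:
        run = run + 1 if slot is None else 0
        best = max(best, run)
    return best


def can_allocate(slots, size):
    """A request succeeds only if one contiguous free run is big enough."""
    return largest_free_run(slots) >= size


# Eight slots stand in for memory. Fill them with eight small tensors,
# then free every other one, leaving holes between the survivors.
slots = [f"t{i}" for i in range(8)]
for i in range(0, 8, 2):
    slots[i] = None

free_total = slots.count(None)
print("free slots:", free_total)
print("largest contiguous run:", largest_free_run(slots))
print("can allocate 2 contiguous slots:", can_allocate(slots, 2))
```

Half the memory is free, yet a request for two contiguous slots fails.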

Raw FLOPS are meaningless if there is no place for the tensors.

When an error occurred in Colab, I would always blame the platform, restart, and try again. On my own machine, the error is mine. The limitations are mine. There is no escape.

**Constraints are teachers.**

When I stopped fighting the memory limits and started designing the system to fit them, everything changed. A smaller batch size, gradient checkpointing, quantization, offloading: each workaround taught me more than the original tutorial.
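As one illustration of designing around the limit: a smaller batch is commonly paired with gradient accumulation, so the optimizer still sees the batch size you wanted. That pairing is an addition here, not something the original run is known to have used, and `plan_accumulation` is a hypothetical helper. The sketch only does the arithmetic of splitting a target batch into micro-batches that fit.

```python
def plan_accumulation(target_batch, max_micro_batch):
    """Split a target batch into equal micro-batches that fit in memory.

    Returns (micro_batch, accumulation_steps) such that
    micro_batch <= max_micro_batch and
    micro_batch * accumulation_steps == target_batch.
    """
    if target_batch < 1 or max_micro_batch < 1:
        raise ValueError("batch sizes must be positive")
    # Walk down from the largest size that fits until one divides evenly.
    # A micro-batch of 1 always divides, so the loop always returns.
    for micro in range(min(target_batch, max_micro_batch), 0, -1):
        if target_batch % micro == 0:
            return micro, target_batch // micro


# Suppose the tutorial assumed a batch of 32, but only 4 samples fit at once.
micro, steps = plan_accumulation(32, 4)
print(f"micro-batch {micro} x {steps} accumulation steps = {micro * steps}")
```

The trade-off is time: the same effective batch now takes several forward and backward passes instead of one.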

I'd Learned This Before

The funny thing is, this was not new.

Years earlier, at WalletHub, I had learned the same lesson in a different domain.

We had databases, Elasticsearch, SSR pages, aggressive SEO targets, and constant security pressure.

I thought:

- The database stores data

- Elasticsearch makes search faster

- SSR makes pages visible

- Security is something you patch

Reality was harsher.

The database isn't just storage, it's a system. Search is a fight. SEO is a feedback loop. Fixing security holes never ends unless protection is built into the infrastructure from the design stage. Our website was under attack all the time, and every month brought new reports, whether from BugCrowd, HackerOne, or vulnerabilities in open-source dependencies.

I didn't fail because I wrote bad code. I failed because I misunderstood how hostile real systems are.

Local AI sharpened a lesson I had already paid for.

Abstractions hide complexity. But complexity does not disappear. It waits.

Before vs After

Before running AI locally:

- Assumed resources were elastic

- Blamed platforms for failures

- Treated constraints as bugs

After:

- Resources are finite

- Failures are information

- Constraints are curriculum

These lessons extend far beyond AI.

They shape how I think about checkout flows, API rate limits, frontend performance, infrastructure contracts — anything that runs under pressure.

Why I Keep Doing It

I do not run AI locally to replace the cloud.

I do it to stay honest.

To remember that:

- Abstractions have a cost

- Performance is contextual

- Systems fail physically

- Understanding the machine still matters

That perspective has made me a better engineer - everywhere else.

If You're Curious

You do not need a data centre.

You do not need the latest GPU.

You just need enough hardware to feel:

- Constraints

- Friction

- Trade-offs

**Start small.**

Run Stable Diffusion locally. Or try a LoRA fine-tune on a 7B model. Push it until it crashes.

That crash is the curriculum.

That is where real learning starts.
