The Bugs That Kept Coming Back

I was hired to build frontends. Then BugCrowd reports started landing in my inbox. What I learned about security when patching stopped working.

I wasn't hired to take care of security. My job was to be the frontend engineer for WalletHub: building the web pages, writing the Angular components, and making everything look right. Security was someone else's job. But then BugCrowd started sending reports to my channel non-stop, and the researchers kept coming. Every week there was another XSS report. There were injection vulnerabilities in endpoints I'd forgotten I built, and session authentication mechanisms that were broken.

The Flood

WalletHub has an active bug bounty program. Security researchers are constantly testing inputs, fuzzing endpoints, and looking for vulnerabilities that no one expected to find. At first, I treated each report like any other bug: find it, fix it, move on. Then something strange started to happen. The same type of vulnerability kept coming back. Fixing one XSS would only lead to another appearing elsewhere, much like patching one hole in a boat only to have water leak in from another. Not the same bug twice, but the same type, over and over.

The Wake-Up Call

In the early days of WordPress, we were faced with a vulnerability that affected all WordPress installations at that time: cross-site scripting through Adobe Flash SWF files, which you may remember. Every WordPress website, including our own, was affected. That was when security stopped being abstract. This wasn't just one researcher trying to find a vulnerability in my code. It was a widespread attack that affected millions of websites, and we had to fix it. We patched it, as did everyone else, but the lesson remained: vulnerabilities don't wait for you to be prepared.

Why Patches Didn't Stick

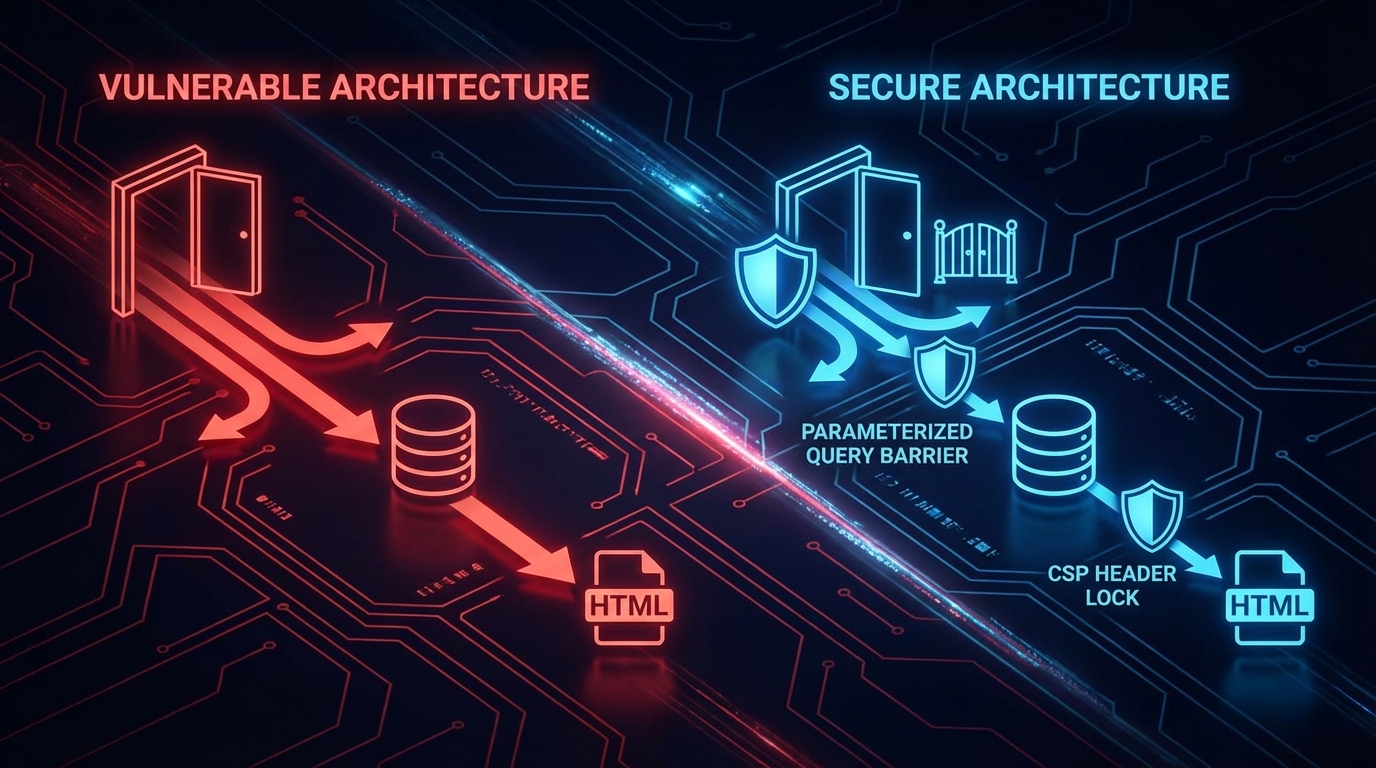

After a few months of BugCrowd reports, I stopped seeing these problems as random events and started looking for connections instead. I found that the problems weren't unrelated. They were all rooted in the same thing: the architecture, and specifically the model we were using, made it far too easy to build vulnerable systems without realizing it. Input flowed from the browser through the backend to the database with barely any change.

What Actually Worked

I stopped solving bugs one by one and started asking a different question: how do we make entire classes of bugs impossible?

- Validate input at the boundary, encode output for context. Input gets checked, then rejected or reformatted, at the point of entry into the system. Every output gets encoded for where it's going: HTML encoding for HTML, parameterization or escaping for SQL queries, JSON encoding for JSON. Not "somewhere in the middleware," but at every boundary.

- Deny by default. APIs expose nothing unless explicitly allowed. Endpoints return the minimum data needed. Permissions are restrictive by default, opened only when there's a documented reason.

- Content Security Policy headers. Browser-enforced headers that block entire attack vectors before your code even runs, stopping most XSS at the browser level.
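Here is a minimal TypeScript sketch of those ideas side by side: validation at the boundary, output encoding for the HTML context, a deny-by-default response shape, and a restrictive CSP header value. Every name in it (`parseCommentInput`, `escapeHtml`, `toPublicUser`, `PUBLIC_USER_FIELDS`, the field rules) is hypothetical and chosen for illustration; it is not WalletHub's actual code, and a real policy would need tuning for the app it protects.

```typescript
// Illustrative sketch only: every name and rule here is a hypothetical example.

type Result<T> = { ok: true; value: T } | { ok: false; error: string };

interface CommentInput {
  author: string;
  body: string;
}

// Boundary validation: accept `unknown`, return a typed value or a rejection.
function parseCommentInput(raw: unknown): Result<CommentInput> {
  if (typeof raw !== "object" || raw === null) {
    return { ok: false, error: "expected an object" };
  }
  const record = raw as Record<string, unknown>;
  const author = record["author"];
  const body = record["body"];
  if (typeof author !== "string" || !/^[A-Za-z0-9_]{3,32}$/.test(author)) {
    return { ok: false, error: "invalid author" };
  }
  if (typeof body !== "string" || body.trim().length === 0 || body.length > 2000) {
    return { ok: false, error: "invalid body" };
  }
  // Reformat on the way in: keep only the trimmed body.
  return { ok: true, value: { author, body: body.trim() } };
}

const HTML_ENTITIES: Record<string, string> = {
  "&": "&amp;",
  "<": "&lt;",
  ">": "&gt;",
  '"': "&quot;",
  "'": "&#39;",
};

// Output encoding for the HTML context, applied where the value is rendered.
function escapeHtml(text: string): string {
  return text.replace(/[&<>"']/g, (ch) => HTML_ENTITIES[ch] ?? ch);
}

// Deny by default: only fields on the allowlist ever leave the API.
const PUBLIC_USER_FIELDS = ["id", "displayName"] as const;

function toPublicUser(row: Record<string, unknown>): Record<string, unknown> {
  const out: Record<string, unknown> = {};
  for (const field of PUBLIC_USER_FIELDS) {
    if (field in row) out[field] = row[field];
  }
  return out;
}

// A restrictive starting policy; the directives are standard CSP, the choice is an assumption.
const CSP_HEADER_NAME = "Content-Security-Policy";
const CSP_HEADER_VALUE = [
  "default-src 'self'",
  "script-src 'self'",
  "object-src 'none'",
  "base-uri 'self'",
].join("; ");
```

The point of the shape is that each function sits at one boundary. A new column added to the user table stays private until someone adds it to the allowlist on purpose, and a comment body is stored as data and only becomes HTML-safe text at render time.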

The Frustrating Truth

The hardest part wasn't building a scanner or writing a content security policy. It was accepting that most security problems aren't bugs, but design decisions. When your architecture trusts user input by default, every feature you ship is a potential vulnerability. When your framework makes escaping opt-in instead of opt-out, every template is a risk.
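To make the opt-in versus opt-out distinction concrete, here is a small sketch of a template helper where escaping is the default and raw markup has to be requested explicitly. The `html` tag, the `trusted` wrapper, and the `TrustedHtml` class are hypothetical names for illustration, not the API of any particular framework.

```typescript
// Illustrative sketch: escaping is the default, raw markup is the explicit exception.

class TrustedHtml {
  readonly value: string;
  constructor(value: string) {
    this.value = value;
  }
}

const ENTITY_MAP: Record<string, string> = {
  "&": "&amp;",
  "<": "&lt;",
  ">": "&gt;",
  '"': "&quot;",
  "'": "&#39;",
};

function escapeText(text: string): string {
  return text.replace(/[&<>"']/g, (ch) => ENTITY_MAP[ch] ?? ch);
}

// The opt-out: a deliberate, greppable call that marks markup as already safe.
function trusted(markup: string): TrustedHtml {
  return new TrustedHtml(markup);
}

// Tagged template: every interpolated value is escaped unless it is TrustedHtml.
function html(strings: TemplateStringsArray, ...values: unknown[]): TrustedHtml {
  let out = strings[0] ?? "";
  values.forEach((value, i) => {
    out += value instanceof TrustedHtml ? value.value : escapeText(String(value));
    out += strings[i + 1] ?? "";
  });
  return new TrustedHtml(out);
}
```

With this shape, forgetting to think about escaping produces safe output, and the risky cases are exactly the places where someone typed `trusted(...)`, which is a much shorter list to review than every template in the codebase.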

What It Taught Me

I've applied the lessons from WalletHub to every system I've built since then. They show up in how I design access control for systems that must not fail and in how I think about security in agentic systems.

I think about abuse cases before features. Before building an access system, I always ask: What if someone attempts SQL injection? What if they inject scripts? What if they replay this request 10,000 times? What if they tamper with the payload?

I design systems for hostile input. This approach isn't paranoia, but pattern recognition learned from seeing the same bugs repeat. It's about building systems that make the wrong thing hard.

I don't trust framework abstractions. Even when frameworks claim to handle sanitization and CSRF, I verify them myself, because gaps eventually appear.

I assume the worst case. Whether it's user input, API responses, webhook payloads, or database records, nothing is trusted until it's proven valid.
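The "replay this request 10,000 times" question is one where a few lines of code make the abuse case tangible. The sketch below is an assumption-heavy illustration, not a production design: `ReplayGuard` and `FixedWindowLimiter` are hypothetical names, state lives in memory on a single process, and time is passed in as a parameter so the behavior is easy to test.

```typescript
// Illustrative sketch: in-memory guards for replayed and repeated requests.

// Rejects a request id that was already seen within the TTL.
class ReplayGuard {
  private readonly ttlMs: number;
  private readonly seen = new Map<string, number>(); // request id -> expiry time (ms)

  constructor(ttlMs: number) {
    this.ttlMs = ttlMs;
  }

  accept(requestId: string, nowMs: number): boolean {
    // Forget ids whose TTL has passed so the map does not grow forever.
    this.seen.forEach((expiry, id) => {
      if (expiry <= nowMs) this.seen.delete(id);
    });
    if (this.seen.has(requestId)) return false;
    this.seen.set(requestId, nowMs + this.ttlMs);
    return true;
  }
}

// Allows at most `limit` requests per client in each fixed time window.
class FixedWindowLimiter {
  private readonly limit: number;
  private readonly windowMs: number;
  private readonly counts = new Map<string, { windowStart: number; count: number }>();

  constructor(limit: number, windowMs: number) {
    this.limit = limit;
    this.windowMs = windowMs;
  }

  allow(clientId: string, nowMs: number): boolean {
    const entry = this.counts.get(clientId);
    if (entry === undefined || nowMs - entry.windowStart >= this.windowMs) {
      // First request from this client, or the previous window has ended.
      this.counts.set(clientId, { windowStart: nowMs, count: 1 });
      return true;
    }
    if (entry.count >= this.limit) return false;
    entry.count += 1;
    return true;
  }
}
```

Neither class addresses payload tampering; that would need a signature check on the request body, which is left out here. What they do show is the habit: write down the abuse case first, then make the default answer to it "no".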

If You're Patching the Same Bugs

Stop patching. Start asking why the architecture keeps producing these bugs.

The answer is usually one of three things:

- Trust boundaries are in the wrong place

- The default behavior is insecure

- Security is opt-in instead of opt-out

Fix those, and the BugCrowd reports slow down. Not because researchers stop looking. Just because there's less to find.